Introduction

Cameras are incredible tools that allow us to capture and make sense of the visible world around us. Most mobile phones made today come with a camera, so more people than ever are familiar with camera software and taking images. One of the largest applications of cameras, however, is scientific imaging: capturing images for scientific research. These applications require carefully manufactured scientific cameras.

What Is Light?

The most important property of a scientific camera is that it is quantitative: it measures a specific quantity of something. In this case that quantity is light, and the most basic measurable unit of light is the photon.

Photons are particles that make up all types of electromagnetic radiation, including visible light and radio waves, as seen in Figure 1. One of the most important parts of this spectrum for imaging is visible light, which ranges from roughly 380 to 750 nanometers, as seen in the inset of Figure 1.

As microscopes typically use visible, infrared (IR) or ultraviolet (UV) light from a lamp or laser, a scientific camera is essentially a device that detects and counts photons using a sensor.
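As a small illustration of the bands mentioned above, the sketch below labels a wavelength as UV, visible or IR using the approximate 380-750 nm range for visible light. The function name and the hard cut-offs are our own simplification: anything shorter than 380 nm is treated as UV and anything longer than 750 nm as IR, ignoring the rest of the electromagnetic spectrum.

```python
# Approximate limits of visible light quoted in the text, in nanometres.
VISIBLE_MIN_NM = 380.0
VISIBLE_MAX_NM = 750.0


def classify_wavelength(wavelength_nm: float) -> str:
    """Label a wavelength as "UV", "visible" or "IR" (hypothetical helper).

    Simplification: everything below the visible band is called UV and
    everything above it IR; X-rays, microwaves, radio waves, etc. are ignored.
    """
    if wavelength_nm <= 0:
        raise ValueError("wavelength must be positive")
    if wavelength_nm < VISIBLE_MIN_NM:
        return "UV"
    if wavelength_nm <= VISIBLE_MAX_NM:
        return "visible"
    return "IR"


if __name__ == "__main__":
    # A few example wavelengths, in nm.
    for wl in (350, 488, 561, 640, 800):
        print(wl, "nm ->", classify_wavelength(wl))
```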

Sensors

A sensor for a scientific camera needs to detect and count photons, and then convert them into electrical signals. This involves multiple steps, the first of which is detecting the photons. Scientific cameras use photodetectors, which convert photons that hit them into electrons. These photodetectors are typically made of a very thin layer of silicon: when photons from a light source hit this layer, they are converted into electrons. A layout of such a sensor can be seen in Figure 2.

Sensor Pixels

However, a single undivided block of silicon would allow photons to be detected but not localized. By dividing the silicon layer into a grid of many tiny squares, photons can be both detected and localized. These tiny squares are called pixels, and technology has developed to the point where millions of them fit onto a single sensor. When a camera is advertised as having 1 megapixel, its sensor is an array of one million pixels, such as a 1000×1000 grid.

In order to fit more pixels onto sensors, pixels have become very small, but because there are millions of them the sensors themselves are still comparatively large. The Prime BSI camera has 6.5 µm square pixels (42.25 µm² area) arranged in a 2048 x 2048 array (4.2 million pixels), giving a sensor size of 13.3 x 13.3 mm and a diagonal of 18.8 mm. The Prime 95B has a sensor of nearly the same size (13.2 x 13.2 mm, 18.7 mm diagonal), but with 11 µm square pixels (121 µm² area) in a 1200 x 1200 array (1.4 million pixels). The Prime 95B therefore has fewer pixels (lowering the maximum imaging resolution), but each pixel has nearly 3x the area (increasing sensitivity).
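The pixel arithmetic in this paragraph can be checked with a short script. The `Sensor` class below is a hypothetical helper, not part of any camera SDK; it assumes square pixels and derives pixel count, pixel area, sensor dimensions and diagonal from the pixel size and array size quoted for the Prime BSI and Prime 95B.

```python
import math
from dataclasses import dataclass


@dataclass
class Sensor:
    """Square-pixel sensor described by pixel size and array dimensions."""
    name: str
    pixel_um: float  # pixel edge length in micrometres
    cols: int        # pixels per row
    rows: int        # pixels per column

    @property
    def pixel_count(self) -> int:
        return self.cols * self.rows

    @property
    def megapixels(self) -> float:
        return self.pixel_count / 1e6

    @property
    def pixel_area_um2(self) -> float:
        return self.pixel_um ** 2

    @property
    def width_mm(self) -> float:
        return self.cols * self.pixel_um / 1000.0  # µm -> mm

    @property
    def height_mm(self) -> float:
        return self.rows * self.pixel_um / 1000.0  # µm -> mm

    @property
    def diagonal_mm(self) -> float:
        return math.hypot(self.width_mm, self.height_mm)


if __name__ == "__main__":
    bsi = Sensor("Prime BSI", 6.5, 2048, 2048)
    b95 = Sensor("Prime 95B", 11.0, 1200, 1200)
    for s in (bsi, b95):
        print(f"{s.name}: {s.megapixels:.1f} MP, {s.pixel_area_um2:.2f} um^2 pixels, "
              f"{s.width_mm:.1f} x {s.height_mm:.1f} mm, diagonal {s.diagonal_mm:.1f} mm")
    print(f"Pixel area ratio: {b95.pixel_area_um2 / bsi.pixel_area_um2:.2f}")
```

Run as a script, it prints these figures for both cameras, plus the ratio of their pixel areas (about 2.86).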

Making pixels smaller allows more of them to fit on a sensor, but smaller pixels each collect fewer photons, so camera design involves a compromise between resolution and sensitivity. One option to consider is binning, which is discussed in a separate article. For these reasons, the overall sensor size, pixel size, and number of pixels are carefully optimized in camera design, and pixel size is a vital metric to consider when choosing a scientific camera.
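Binning is covered in its own article, but as a rough illustration of the resolution/sensitivity trade-off, the sketch below sums each 2 x 2 block of pixel values into one "superpixel". This halves the pixel count along each axis while each output value collects the signal of four pixels. It is a software illustration under our own assumptions (the `bin_image` name and the sample frame are hypothetical); binning performed on the sensor itself may behave differently.

```python
from typing import List

Image = List[List[int]]


def bin_image(image: Image, factor: int = 2) -> Image:
    """Sum each factor x factor block of pixels into one superpixel.

    Rows/columns at the bottom/right edge that do not fill a complete
    block are discarded.
    """
    if factor < 1:
        raise ValueError("factor must be >= 1")
    rows = len(image) // factor
    cols = len(image[0]) // factor if image else 0
    binned: Image = []
    for r in range(rows):
        out_row = []
        for c in range(cols):
            # Add up every pixel inside this block.
            total = 0
            for dr in range(factor):
                for dc in range(factor):
                    total += image[r * factor + dr][c * factor + dc]
            out_row.append(total)
        binned.append(out_row)
    return binned


if __name__ == "__main__":
    # Hypothetical 4 x 4 frame with a dim signal of ~10 photoelectrons per pixel.
    frame = [[10, 11, 9, 10],
             [12, 10, 10, 8],
             [9, 10, 11, 10],
             [10, 9, 10, 12]]
    print(bin_image(frame, 2))
```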

Generating An Image

When exposed to light, each pixel of the sensor records how many photons hit it. The result is a map of values, one per pixel. This array of measurements, known as a bitmap, is the basis of all scientific images taken with cameras; the values it contains depend on the signal level of the experiment and application. The bitmap is accompanied by metadata, which holds all other information about the image, such as the time it was taken, camera settings, imaging software settings, and microscope hardware information.
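One way to picture the bitmap-plus-metadata pairing is as a simple data structure. The `ScientificImage` class and its metadata keys below are hypothetical, chosen only to mirror the categories named above; real acquisition software and file formats define their own layouts.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Any, Dict, List, Tuple


@dataclass
class ScientificImage:
    """A bitmap of per-pixel values plus the metadata that accompanies it."""
    bitmap: List[List[int]]  # one value per pixel, row by row
    metadata: Dict[str, Any] = field(default_factory=dict)

    @property
    def shape(self) -> Tuple[int, int]:
        rows = len(self.bitmap)
        cols = len(self.bitmap[0]) if rows else 0
        return rows, cols

    def value_at(self, row: int, col: int) -> int:
        """Return the measurement recorded by a single pixel."""
        return self.bitmap[row][col]


if __name__ == "__main__":
    img = ScientificImage(
        bitmap=[[0, 3, 1],
                [2, 15, 4],
                [1, 5, 2]],
        metadata={
            # Hypothetical keys covering time, camera and microscope information.
            "timestamp": datetime.now(timezone.utc).isoformat(),
            "exposure_ms": 100,
            "camera": {"bit_depth": 12},
            "microscope": {"objective": "60x"},
        },
    )
    print(img.shape, img.value_at(1, 1), sorted(img.metadata))
```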

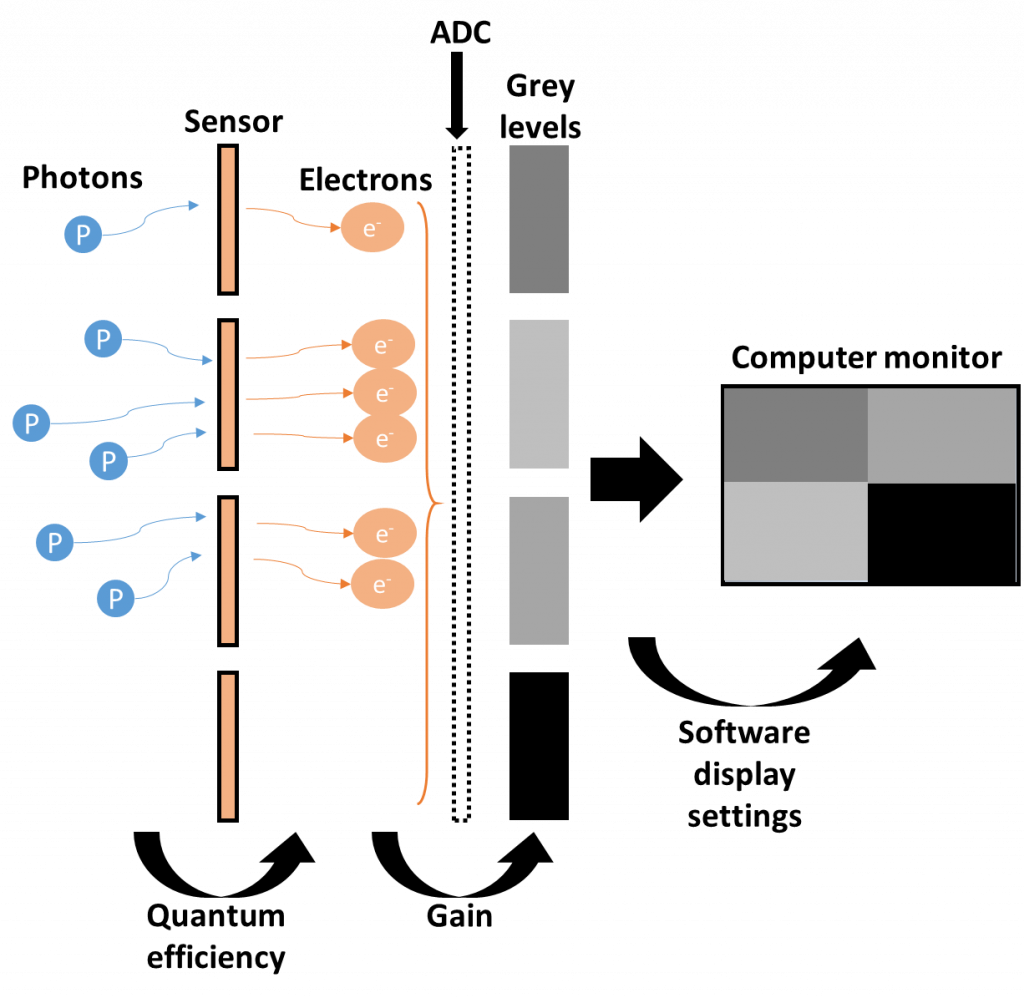

The following are the processes involved in generating an image from light using a scientific camera:

- Photons that hit the sensor are converted into electrons (called photoelectrons).

- The fraction of photons converted to electrons is known as the quantum efficiency (QE). With a QE of 50%, only half of the incoming photons are converted to electrons, and the information carried by the rest is lost.

- The generated electrons are stored in a well in each pixel, giving a quantitative count of electrons per pixel.

- The maximum number of electrons that can be stored in the well is known as the full well capacity, which sets the upper end of the sensor's dynamic range.

- The electron charge in each pixel's well is amplified into a readable voltage; this is the analogue signal.

- The analogue signal is converted from a voltage into a digital value by an analogue-to-digital converter (ADC). This digital value, in arbitrary units, is known as a grey level, as most scientific cameras are monochrome.

- The conversion factor between electrons and grey levels is known as gain (expressed here as grey levels per electron). With a gain of 1.5, 100 electrons are converted to 150 grey levels.

- The bit depth of the camera determines how many grey levels are available to represent the signal: a 12-bit camera has 4096 (2¹²) grey levels and a 16-bit camera has 65,536 (2¹⁶).

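- Together with the gain, the bit depth also limits the usable full well capacity and therefore the dynamic range: a 12-bit camera at a gain of 1 grey level per electron cannot record more than 4095 electrons per pixel, however many electrons the well can physically hold.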

- The map of grey levels is displayed on the computer monitor in the imaging software as an image.

- The displayed image also depends on software settings such as brightness and contrast.

These steps are visualized in Figure 4.
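The chain of steps listed above can be sketched as a small Python function. This is a simplified, deterministic model under our own assumptions: the function names and parameter values are hypothetical, gain is taken as grey levels per electron (matching the 1.5 example above), and real-world effects such as noise are ignored. A second helper shows a basic linear brightness/contrast mapping of grey levels onto 0-255 for display.

```python
from typing import List


def photons_to_grey_levels(
    photons: List[List[float]],
    qe: float,
    full_well_e: float,
    gain_gl_per_e: float,
    bit_depth: int,
) -> List[List[int]]:
    """Convert a per-pixel photon map into grey levels, step by step."""
    max_grey = 2 ** bit_depth - 1  # 4095 for 12-bit, 65535 for 16-bit
    grey: List[List[int]] = []
    for row in photons:
        out_row = []
        for n_photons in row:
            electrons = n_photons * qe                    # quantum efficiency
            electrons = min(electrons, full_well_e)       # well saturates at full well capacity
            level = round(electrons * gain_gl_per_e)      # gain: grey levels per electron
            out_row.append(min(max(level, 0), max_grey))  # ADC range set by bit depth
        grey.append(out_row)
    return grey


def to_display(grey: List[List[int]], black: int, white: int) -> List[List[int]]:
    """Linearly map grey levels in [black, white] onto 0-255 for a monitor."""
    if white <= black:
        raise ValueError("white must be greater than black")
    scale = 255 / (white - black)
    return [[min(255, max(0, round((g - black) * scale))) for g in row] for row in grey]


if __name__ == "__main__":
    photon_map = [[0, 200], [2000, 20000]]
    grey = photons_to_grey_levels(photon_map, qe=0.5, full_well_e=10000,
                                  gain_gl_per_e=1.5, bit_depth=12)
    print(grey)
    print(to_display(grey, black=0, white=4095))
```

With these illustrative parameters, the 200-photon pixel yields 100 electrons and 150 grey levels, as in the gain example above, while the brightest pixel is clipped at 4095 by the 12-bit ADC. At a gain of 1.5 that ceiling corresponds to only 2,730 electrons, well below the assumed 10,000-electron full well.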

In this manner, photons are converted to electrons, which are converted into a digital signal and displayed as an image. These main stages of imaging with a scientific camera are consistent across all modern camera technologies, but there are several different types of sensor architecture and design.

Types Of Camera Sensor

Camera sensors are at the heart of the camera and have been subject to numerous different iterations over the years. Researchers are constantly on the lookout for better sensors which can improve their images, bringing better resolution, sensitivity, field of view, and speed. The three main camera sensor technologies are:

- Charge-coupled device (CCD)

- Electron-multiplied charge-coupled device (EMCCD)

- Complementary metal-oxide-semiconductor (CMOS)

Each of these sensors is discussed in detail in our next article, Camera Sensor Types.

Summary

A scientific camera is a vital component of any imaging system. These cameras are designed to measure quantitatively how many photons hit each part of the camera sensor. Photons generate electrons (photoelectrons), which are stored in the sensor pixels, converted to a digital signal, and displayed as an image. Every stage of this process is optimized to produce the best possible image for the signal received.